When you deploy your API product on the edge, you’re no more than 50ms from almost all the internet-connected folks in the world.

It’s our favorite fact about an edge-optimized API, but not for the reasons you might think. Yes, sheer speed is great. You don’t have to worry so much about the latency between a data center in the U.S. and an API consumer in Japan. It’s like running a brick-and-mortar business and having a store in 100+ cities worldwide, where you’re globally present but retain the local personalized approach to selling… but many orders of magnitude faster.

But when you focus too much on speed, you overlook an even bigger value for your API business. The global reach and unique qualities of an edge network let you layer consistent policies and user-friendly experiences into a blindingly fast handshake, giving you clearer paths to creating a viable business model around your API.

Let’s just put it this way: Thanks to global reach and local personalization, a lot can happen in just 50ms.

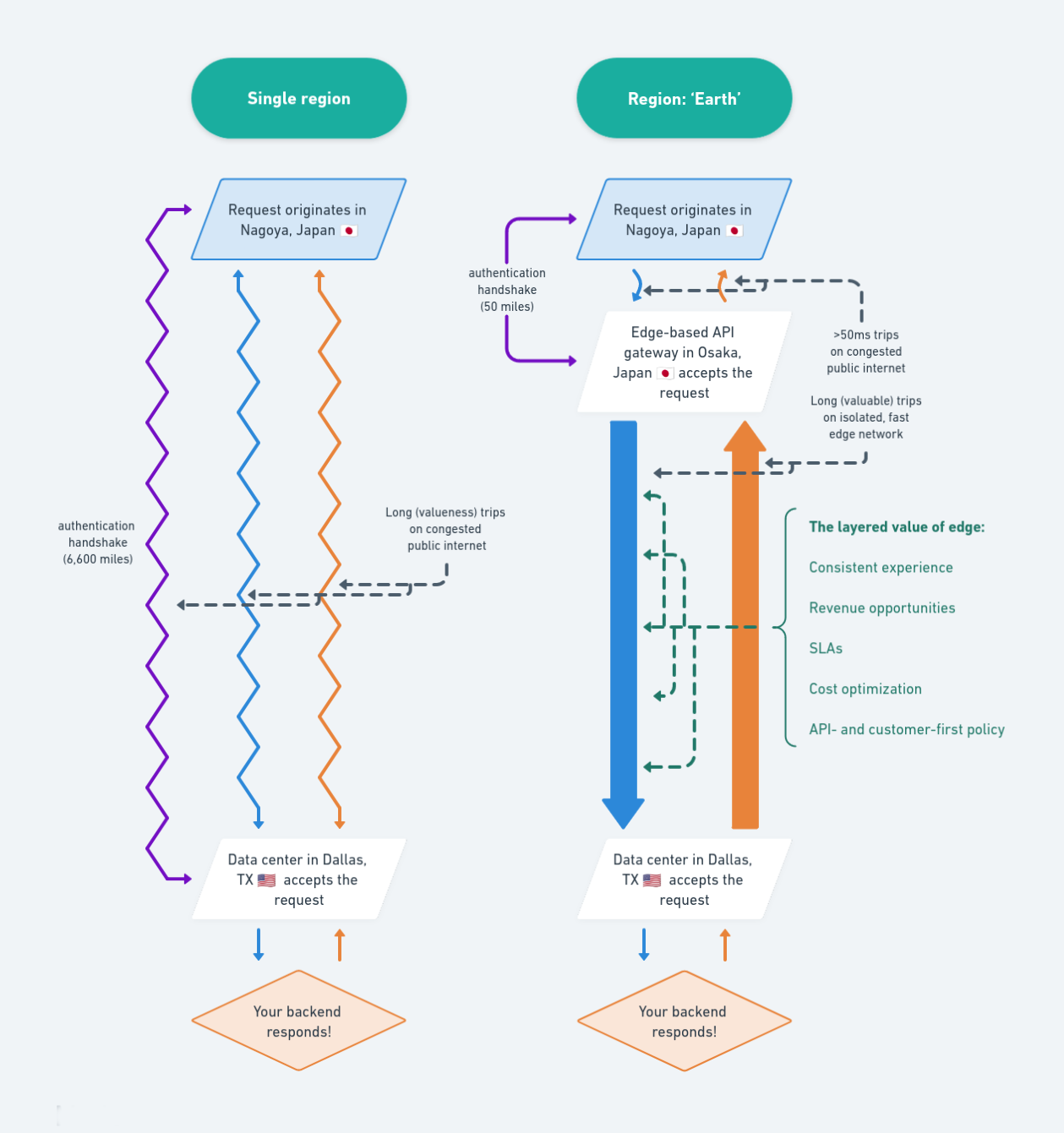

Two traces to an API product: Single region vs. ‘Region: Earth’

To show what that means, let’s trace the life of two requests: one for a traditional regional deployment of an API product, and another for an API as a business fully on the edge.

Single region

- The request originates in Nagoya, Japan.

- The request bounces through the congested backbone internet infrastructure toward its destination, 6,600 miles away: the single-region Google Cloud Platform data center you chose when you launched your API business, based in Dallas, Texas, USA.

- Your ingress, such as an API gateway, receives the request and:

  - Performs an authentication handshake with the consumer, requiring more 6,600-mile round trips.

  - Routes the request to your backend in the same data center.

- Your backend returns a response.

- The response traverses the long final leg of its trip, back across the Pacific Ocean, to reach your API consumer.

Data is fast, but that’s a notable journey in the wilderness of the public internet. For your API business, this distance-limited speed means everyone far from Dallas, whether they’re outside North America or even on the East or West Coast, is a second-class citizen for your API as a product. They’ll never get the same value as an API consumer in Austin.

You’re not a single-region deployment—you’re region-locked.

Our customers have experienced the pain caused by this distinction firsthand. One of them told us: “We were losing potential customers because of the additional latency between our services in the U.S. and their clients in Asia. It was too detrimental to their service, and they were more likely to go to an alternative service provider with a data center in APAC.”

The regional confinement of your API as a product gets even worse if you’re deploying your backend to multiple clouds, which creates additional hops between your ingress and backend service, stretching latencies even further.

You don’t have to be region-locked, but you do need to be willing to pay for the privilege in time, expertise, and licensing fees. You can add a region to your GCP deployment if you’re willing to nearly double your monthly bill to provide better service for one other group of API consumers. You could also stick with a single backend service but host multiple API gateways, like Kong, on a global scale. You’ll pay for the deployments and licenses and be responsible for managing the required Kubernetes clusters.

No wonder most single-region API product deployments stick to the basics: Fantastic speed for some, lackluster service for the rest of the globe.

Region: Earth

- The request originates in Nagoya, Japan.

- The request arrives at its destination, just 50 miles away: your edge deployment in Osaka, Japan.

- Your edge-based API gateway receives the request, then:

  - Runs edge code to complete the authentication with short 50-mile round trips.

  - Routes the request through the high-quality, uncongested edge network to your backend service.

- Your backend returns a response.

- The response travels back through the isolated network to your API consumer.
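
To put rough numbers on the two traces above, here is a minimal sketch that estimates the best-case round-trip time from distance alone. It assumes signals move through fiber at about 200,000 km/s and that an authenticated request needs three round trips (connection setup, TLS negotiation, and the request itself). Both values are assumptions for illustration, and the function names are hypothetical.

```typescript
// Rough lower bound on network round-trip time from distance alone.
// Assumption: signals in optical fiber travel at about 200,000 km/s
// (roughly two-thirds of the speed of light in a vacuum).
const FIBER_SPEED_KM_PER_S = 200000;
const KM_PER_MILE = 1.609344;

// Minimum time in milliseconds for one round trip over `miles` of fiber.
function minRoundTripMs(miles: number): number {
  const km = miles * KM_PER_MILE;
  return ((2 * km) / FIBER_SPEED_KM_PER_S) * 1000;
}

// Minimum time for an exchange that needs several round trips,
// such as connection setup, TLS negotiation, then the request itself.
function minExchangeMs(miles: number, roundTrips: number): number {
  return minRoundTripMs(miles) * roundTrips;
}

// Assumed: three round trips between the consumer and the gateway.
const singleRegionMs = minExchangeMs(6600, 3); // Nagoya to Dallas
const edgeMs = minExchangeMs(50, 3);           // Nagoya to Osaka
```

Under these assumptions, each round trip to Dallas costs at least about 106 ms (about 319 ms for all three), while each round trip to Osaka costs under 1 ms. Real routes are longer than a straight line, so actual numbers will be higher, and the edge request still has to reach your backend whenever the response isn’t cached.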

When you deploy your API product on the edge, there’s no picking regions. When you choose an API gateway like Zuplo that deploys to an edge network, like Akamai, Cloudflare, Fastly, or others, your ingress, load balancing, and API policies are automatically deployed in 300+ cities and 120+ countries. You’re close to your API consumers, whether they’re individuals moving about on their phones or businesses with servers in Azure.

The same client later said of their API product on the edge: “Having Zuplo’s gateway on top [of their deployed nodes in different locations] means the node can reside in any region in the world. We simply point the traffic to the closest point of presence and cache the most common data.”

Deploying your API gateway on “region: Earth” also means you’re multi-cloud adaptable—each gateway, running on hundreds of global points of presence (PoPs), can connect directly to individual cloud providers without additional hops or building out a DevOps team to run Kubernetes clusters. It’s one way to future-proof your API product as you build new features or acquire other API companies and integrate their tech into yours.

The many layers of <50ms

As an API-first company, you recognize that while speed matters, so does the way you organize, secure, and customize your API experience for consistency and quality. Only on the edge can you make so much happen in just 50ms.

Consistent experience

Speed is a foothold in customer experience, not the destination. Speed might get someone in the door, but consistency keeps them around and gives them the confidence to rely on your API product for their activities tied to revenue or end-user experience.

Your edge-based API will deliver consistent latency and availability no matter where your API consumer is now or will travel tomorrow. If they need to migrate their services from one cloud or region to another, you can guarantee that everything about how they interact with your API, and the speed of its responses, will remain the same.

Consistency is what gets free trial users to commit to a long-term plan.

Revenue opportunity

An edge network enriches incoming requests with additional information, like the two-letter country code and the ASN of the cloud provider where each request originated. With this context and a programmable API gateway, you can customize pricing tiers, features, and rate limits based on every request’s geographic (or cloud provider) origin. They’ll be applied across all your edge PoPs, so your customers know exactly what they’re paying for and you deliver exactly what you promise.
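
As a rough illustration of that idea, the sketch below picks a rate limit from a request’s country code, ASN, and plan. Everything in it is hypothetical: the `RequestOrigin` shape, the `limitsFor` function, the multipliers, and the ASN list are assumptions for this example, not the API of Zuplo or any edge network.

```typescript
// Hypothetical shape of the context an edge network attaches to a request.
interface RequestOrigin {
  country: string; // two-letter country code, e.g. "JP"
  asn: number;     // autonomous system number of the originating network
}

type Plan = "free" | "paid";

interface Limits {
  tier: string;
  requestsPerMinute: number;
}

// Illustrative values only. 64500 and 64501 come from the ASN range
// reserved for documentation, standing in for real cloud-provider ASNs.
const CLOUD_PROVIDER_ASNS: number[] = [64500, 64501];

// Illustrative per-country multipliers; countries not listed get 1.
const COUNTRY_MULTIPLIER: Record<string, number | undefined> = { JP: 2, US: 1 };

function limitsFor(origin: RequestOrigin, plan: Plan): Limits {
  const base = plan === "paid" ? 600 : 60;
  const multiplier = COUNTRY_MULTIPLIER[origin.country] ?? 1;
  const fromCloud = CLOUD_PROVIDER_ASNS.indexOf(origin.asn) !== -1;
  return {
    tier: `${plan}-${fromCloud ? "server" : "standard"}`,
    // Server-to-server traffic gets a higher ceiling in this sketch.
    requestsPerMinute: base * multiplier * (fromCloud ? 10 : 1),
  };
}
```

Because the decision is a pure function of the request context, the same rule produces the same answer at every PoP, which is what keeps the tiers consistent for your customers.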

For example, you can optimize pricing based on exchange rates, extending your reach while maximizing revenue opportunities. If you have a small number of heavy API users, you can create add-on packages that mitigate how much you spend processing their workloads. When an enterprise customer asks for an SLA, you can deliver.

You get customization, which lets you fearlessly approach new markets without sacrificing consistency.

SLAs

Speaking of SLAs, what happens if we continue the previous example and your edge server in Osaka fails? Your edge network will automatically fail over to the next closest PoP, such as Tokyo, Fukuoka, or Seoul, South Korea. If your edge-based API goes down entirely, there are only two possible causes: 1) you shipped some faulty code and should perform a rollback ASAP, or 2) you’re subject to an internet-wide problem you can’t do anything about.
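
The failover idea can be sketched as “pick the closest PoP that is still healthy.” Real edge networks typically handle this at the routing layer rather than in application code, so treat this only as an illustration. The `Pop` type, the function names, and the approximate city coordinates are assumptions for the example.

```typescript
interface Pop {
  city: string;
  lat: number; // degrees
  lon: number; // degrees
  healthy: boolean;
}

// Great-circle distance in kilometers (haversine formula).
function distanceKm(lat1: number, lon1: number, lat2: number, lon2: number): number {
  const toRad = (deg: number): number => (deg * Math.PI) / 180;
  const dLat = toRad(lat2 - lat1);
  const dLon = toRad(lon2 - lon1);
  const a =
    Math.pow(Math.sin(dLat / 2), 2) +
    Math.cos(toRad(lat1)) * Math.cos(toRad(lat2)) * Math.pow(Math.sin(dLon / 2), 2);
  return 2 * 6371 * Math.asin(Math.sqrt(a));
}

// Returns the closest healthy PoP, or undefined if none is healthy.
function nearestHealthyPop(lat: number, lon: number, pops: Pop[]): Pop | undefined {
  let best: Pop | undefined;
  let bestKm = Infinity;
  for (const pop of pops) {
    if (!pop.healthy) continue;
    const km = distanceKm(lat, lon, pop.lat, pop.lon);
    if (km < bestKm) {
      best = pop;
      bestKm = km;
    }
  }
  return best;
}

// Approximate coordinates, for illustration. Osaka is marked as failed.
const examplePops: Pop[] = [
  { city: "Osaka", lat: 34.69, lon: 135.5, healthy: false },
  { city: "Tokyo", lat: 35.68, lon: 139.69, healthy: true },
  { city: "Fukuoka", lat: 33.59, lon: 130.4, healthy: true },
  { city: "Seoul", lat: 37.57, lon: 126.98, healthy: true },
];

// A consumer in Nagoya (about 35.18 N, 136.91 E) lands on Tokyo.
const fallbackPop = nearestHealthyPop(35.18, 136.91, examplePops);
```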

But those events are exceedingly rare. Edge networks like Cloudflare offer SLAs that guarantee multiple nines of availability and responsiveness. You can confidently build your API company knowing you can pass these guarantees on to your customers.

Cost optimization

50ms is already fast, but the edge can go a whole lot faster with caching. Each PoP caches the most common responses, which lets you reach your API consumers in single-digit milliseconds.

For your API business, the speed is only a knock-on benefit of real optimization. Each cached response delivered by your edge deployment saves your backend server from processing data and returning responses it has dealt with plenty of times already. Lower infrastructure cost lets you profit more from each request or reinvest those savings into other areas of your API business, like optimizing your developer documentation for a faster time-to-first-call.
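
To make the cost argument concrete, here is a minimal sketch of a time-to-live cache standing in for one PoP’s response cache. It counts how often the backend is actually called. The `TtlCache` class and its parameters are hypothetical and kept in memory for illustration; a real edge cache is managed by the network and usually driven by HTTP caching headers.

```typescript
interface CacheEntry<T> {
  value: T;
  expiresAt: number; // epoch milliseconds
}

// Minimal in-memory TTL cache, standing in for one PoP's response cache.
class TtlCache<T> {
  private entries: Record<string, CacheEntry<T> | undefined> = {};
  private ttlMs: number;
  private now: () => number;
  backendCalls = 0;

  // `now` is injectable so the clock can be controlled in tests.
  constructor(ttlMs: number, now: () => number = () => Date.now()) {
    this.ttlMs = ttlMs;
    this.now = now;
  }

  // Returns the cached value if it is still fresh; otherwise calls `load`
  // (the backend) and stores the result for the next request.
  get(key: string, load: () => T): T {
    const hit = this.entries[key];
    if (hit !== undefined && hit.expiresAt > this.now()) {
      return hit.value;
    }
    this.backendCalls += 1;
    const value = load();
    this.entries[key] = { value, expiresAt: this.now() + this.ttlMs };
    return value;
  }
}
```

In this sketch, a thousand requests for the same key inside one TTL window result in a single backend call, which is the saving described above.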

API- and customer-first policy

Every successful API-first company has established and consistently applied strategies for authentication, documentation, protection, versioning, metrics, logging, and a whole lot more. These strategies guide your entire API lifecycle, keeping development consistent and predictable.

For example, an edge network lets you take the same programmability around country codes and restrict requests coming from sanctioned countries, or use separate business logic that keeps your storage and usage of customer data compliant with the ever-changing landscape of data privacy laws like the California Privacy Rights Act (CPRA) or GDPR.
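
A policy like that might look roughly like the sketch below, which blocks requests from a configurable list of countries and chooses a storage region for everyone else. The country lists are placeholders, the EU storage rule is a hypothetical business decision rather than a statement of what any law requires, and `applyCompliancePolicy` is a name invented for this example.

```typescript
type Decision =
  | { action: "block"; reason: string }
  | { action: "allow"; storageRegion: string };

// Placeholder codes only: "XX" and "ZZ" are not real countries. Fill this
// list from the sanctions guidance that applies to your business.
const BLOCKED_COUNTRIES: string[] = ["XX", "ZZ"];

// Illustrative subset of countries whose data this sketch keeps in an
// EU storage region. The rule itself is an assumption for the example.
const EU_RESIDENCY_COUNTRIES: string[] = ["DE", "FR", "IE"];

function applyCompliancePolicy(country: string): Decision {
  const code = country.toUpperCase();
  if (BLOCKED_COUNTRIES.indexOf(code) !== -1) {
    return { action: "block", reason: "country-restricted" };
  }
  const inEu = EU_RESIDENCY_COUNTRIES.indexOf(code) !== -1;
  return { action: "allow", storageRegion: inEu ? "eu" : "us" };
}
```

Keeping the lists as data separate from the decision logic means you can update them as regulations change without touching the code path every request runs through.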

API key management protects your API from unauthorized usage, but it’s not trivial to configure and deploy yourself. With the right API gateway, like Zuplo, and an edge network, you can consistently layer encryption and authentication into your API… without hiring a DevSecOps team.

What’s next?

The fastest way to ship your API as a product to the edge is to start free with Zuplo. You can route requests to your existing backend, requiring no change to your infrastructure, to supercharge your API product in minutes.

To get you off on the right foot, here are a few of the popular and accessible ways to quickly leverage the power of the edge for your API business:

- Getting started on Zuplo: Learn how to ship your API to the edge in four key steps.

- API key authentication: Add consistent and DevOps-free authentication to API products for security, monetization, and more.

- Rate limiting: Programmatically control usage based on free/paid tiers, geography, origin ASNs, or anything available in our ZuploContext API.

- Developer Portal: Explore Zuplo’s functional developer documentation, automatically deployed with user-aware examples, schemas, and self-service API keys.

Loving the edge your API gets from the edge? Let us know what value you’re cramming into those crucial 50ms on Discord, Twitter/X, or LinkedIn.